Free JavaScript Editor

Ajax Editor

11.4. Bump Mapping

We have already seen procedural shaders that modified color (brick, stripes) and opacity (lattice). Another whole class of interesting effects can be applied to a surface with a technique called BUMP MAPPING. Bump mapping involves modulating the surface normal before lighting is applied. We can perform the modulation algorithmically to apply a regular pattern; we can add noise to the components of a normal; or we can look up a perturbation value in a texture map.

Bump mapping has proved to be an effective way of increasing the apparent realism of an object without increasing the geometric complexity. It can be used to simulate surface detail or surface irregularities. The technique does not truly alter the surface being shaded, it merely "tricks" the lighting calculations. Therefore, the "bumping" does not show up on the silhouette edges of an object. Imagine modeling a planet as a sphere and shading it with a bump map so that it appears to have mountains that are quite large relative to the diameter of the planet. Because nothing has been done to change the underlying geometry, which is perfectly round, the silhouette of the sphere always appears perfectly round, even if the mountains (bumps) go right up to the silhouette edge. In real life, you would expect the mountains on the silhouette edges to prevent the silhouette from looking perfectly round. For this reason, it is a good idea to use bump mapping to apply only "small" effects to a surface (at least relative to the size of the surface). Wrinkles on an orange, embossed logos, and pitted bricks are all good examples of things that can be successfully bump-mapped.

Bump mapping adds apparent geometric complexity during fragment processing, so once again the key to the process is our fragment shader. This implies that the lighting operation must be performed by our fragment shader instead of by the vertex shader where it is often handled.
Again, this points out one of the advantages of the programmability that is available through the OpenGL Shading Language. We are free to perform whatever operations are necessary, in either the vertex shader or the fragment shader. We don't need to be bound to the fixed functionality ideas of where things like lighting are performed.

The key to bump mapping is that we need a valid surface normal at each fragment location, and we also need a light source and viewing direction vectors. If we have access to all these values in the fragment shader, we can procedurally perturb the normal prior to the light source calculation to produce the appearance of "bumps." In this case, we really are attempting to produce bumps or small spherical nodules on the surface being rendered.

The light source computation is typically performed with dot products. For the result to have meaning, all the components of the light source calculation must be defined in the same coordinate space. So if we used the vertex shader to perform lighting, we would typically define light source positions or directions in eye coordinates and would transform incoming normals and vertex values into this space to do the calculation.

However, the eye-coordinate system isn't necessarily the best choice for doing lighting in the fragment shader. We could normalize the direction to the light and the surface normal after transforming them to eye space and then pass them to the fragment shader as varying variables. However, the light direction vector would need to be renormalized after interpolation to get accurate results. Moreover, whatever method we use to compute the perturbation normal, it would need to be transformed into eye space and added to the surface normal; that vector would also need to be normalized. Without renormalization, the lighting artifacts would be quite noticeable. Performing these operations at every fragment might be reasonably costly in terms of performance. There is a better way.
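To see why interpolated direction vectors need renormalizing, it may help to work through the arithmetic on the CPU. The following is a minimal Python sketch, not shader code; the two light directions and the helper names (`dot`, `normalize`, `lerp`) are assumptions made purely for illustration.

```python
import math

def dot(a, b):
    return sum(x * y for x, y in zip(a, b))

def normalize(v):
    length = math.sqrt(dot(v, v))
    return tuple(c / length for c in v)

def lerp(a, b, t):
    """Linear interpolation between two per-vertex values."""
    return tuple((1.0 - t) * x + t * y for x, y in zip(a, b))

# Hypothetical unit-length light directions at two vertices, 90 degrees apart.
light_at_v0 = (1.0, 0.0, 0.0)
light_at_v1 = (0.0, 1.0, 0.0)

# Halfway across the primitive, the interpolated vector is shorter than 1.
interpolated = lerp(light_at_v0, light_at_v1, 0.5)
interpolated_length = math.sqrt(dot(interpolated, interpolated))

# A unit normal that faces the interpolated light direction head-on.
normal = normalize((1.0, 1.0, 0.0))

# Without renormalization the diffuse term comes out too dark.
diffuse_raw = dot(normal, interpolated)
# With renormalization the dot product is a true cosine.
diffuse_fixed = dot(normal, normalize(interpolated))

print(interpolated_length, diffuse_raw, diffuse_fixed)
```

In this deliberately extreme case the unnormalized diffuse term is roughly 0.71 where the correct cosine is 1.0. The error shrinks as the per-vertex vectors become more alike, which is why small differences between neighboring vertices are tolerable.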
Let us look at another coordinate space called the SURFACE-LOCAL COORDINATE SPACE. This coordinate system varies over a rendered object, and it assumes that each point is at (0, 0, 0) and that the unperturbed surface normal at each point is (0, 0, 1). This would be a pretty convenient coordinate system in which to do our bump mapping calculations. But, to do our lighting computation, we need to make sure that our light direction, viewing direction, and the computed perturbed normal are all defined in the same coordinate system. If our perturbed normal is defined in surface-local coordinates, that means we need to transform our light direction and viewing direction into surface-local space as well.

How is that accomplished? What we need is a transformation matrix that transforms each incoming vertex into surface-local coordinates (i.e., incoming vertex (x, y, z) is transformed to (0, 0, 0)). We need to construct this transformation matrix at each vertex. Then, at each vertex, we use the surface-local transformation matrix to transform both the light direction and the viewing direction. In this way, the surface-local coordinates of the light direction and the viewing direction are computed at each vertex and interpolated across the primitive. At each fragment, we can use these values to perform our lighting calculation with the perturbed normal that we calculate.

But we still haven't answered the real question. How do we create the transformation matrix that transforms from object coordinates to surface-local coordinates? An infinite number of transforms will transform a particular vertex to (0, 0, 0). To transform incoming vertex values, we need a way that gives consistent results as we interpolate between them. The solution is to require the application to send down one more attribute value for each vertex, a tangent value. Furthermore, we require the application to send us tangents that are consistently defined across the surface of the object.
By definition, this tangent vector is in the plane of the surface being rendered and perpendicular to the incoming surface normal. If defined consistently across the object, it serves to orient consistently the coordinate system that we derive. If we perform a cross-product between the tangent vector and the surface normal, we get a third vector that is perpendicular to the other two. This third vector is called the binormal, and it's something that we can compute in our vertex shader. Together, these three vectors form an orthonormal basis, which is what we need to define the transformation from object coordinates into surface-local coordinates. Because this particular surface-local coordinate system is defined with a tangent vector as one of the basis vectors, this coordinate system is sometimes referred to as TANGENT SPACE.

The transformation from object space to surface-local space is shown in Figure 11.5. We transform the object space vector (Ox, Oy, Oz) into surface-local space by multiplying it by a matrix that contains the tangent vector (Tx, Ty, Tz) in the first row, the binormal vector (Bx, By, Bz) in the second row, and the surface normal (Nx, Ny, Nz) in the third row. We can use this process to transform both the light direction vector and the viewing direction vector into surface-local coordinates. The transformed vectors are interpolated across the primitive, and the interpolated vectors are used in the fragment shader to compute the reflection with the procedurally perturbed normal.

Figure 11.5. Transformation from object space to surface-local space

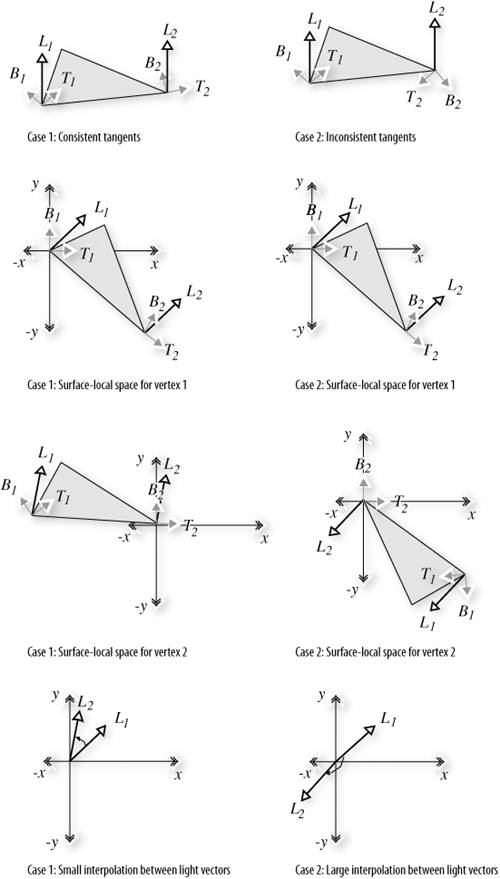
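The matrix multiplication in Figure 11.5 amounts to three dot products, one per row. The Python sketch below works through it on the CPU under a few assumptions: the function name `to_surface_local` is hypothetical, the binormal is taken as `cross(normal, tangent)` so that tangent, binormal, and normal form a right-handed basis (the text does not fix the operand order), and the example surface is a floor with its normal along +y.

```python
import math

def dot(a, b):
    return sum(x * y for x, y in zip(a, b))

def cross(a, b):
    return (a[1] * b[2] - a[2] * b[1],
            a[2] * b[0] - a[0] * b[2],
            a[0] * b[1] - a[1] * b[0])

def normalize(v):
    length = math.sqrt(dot(v, v))
    return tuple(c / length for c in v)

def to_surface_local(v, tangent, normal):
    """Multiply v by the matrix whose rows are tangent, binormal, normal."""
    t = normalize(tangent)
    n = normalize(normal)
    b = cross(n, t)  # binormal; operand order is an assumption (right-handed basis)
    return (dot(v, t), dot(v, b), dot(v, n))

# Hypothetical vertex data: a floor whose normal points along +y.
tangent = (1.0, 0.0, 0.0)
normal = (0.0, 1.0, 0.0)

# The surface normal itself maps to (0, 0, 1) in surface-local space.
local_normal = to_surface_local(normal, tangent, normal)

# A light direction tilted 45 degrees toward the tangent.
light_dir = normalize((1.0, 1.0, 0.0))
local_light = to_surface_local(light_dir, tangent, normal)

print(local_normal, local_light)
```

Because the three rows are orthonormal, the transformation preserves lengths and angles, so a unit light direction stays unit length after the transform.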

11.4.1. Application Setup

For our procedural bump map shader to work properly, the application must send a vertex position, a surface normal, and a tangent vector in the plane of the surface being rendered. The application passes the tangent vector as a generic vertex attribute, and binds the index of the generic attribute to be used to the vertex shader variable tangent by calling glBindAttribLocation. The application is also responsible for providing values for the uniform variables LightPosition, SurfaceColor, BumpDensity, BumpSize, and SpecularFactor.

You must be careful to orient the tangent vectors consistently between vertices; otherwise, the transformation into surface-local coordinates will be inconsistent, and the lighting computation will yield unpredictable results. Consistent tangents can be computed algorithmically for mathematically defined surfaces. Consistent tangents for polygonal objects can be computed with neighboring vertices and by application of a consistent ordering with respect to the object's texture coordinates.

The problem with inconsistently defined tangents is illustrated in Figure 11.6. This diagram shows two triangles, one with consistently defined tangents and one with inconsistently defined tangents. The gray arrowheads indicate the tangent and binormal vectors (the surface normal is pointing straight out of the page). The white arrowheads indicate the direction toward the light source (in this case, a directional light source is illustrated).

Figure 11.6. Inconsistently defined tangents can lead to large lighting errors

When we transform vertex 1 to surface-local coordinates, we get the same initial result in both cases. When we transform vertex 2, we get a large difference because the tangent vectors are very different between the two vertices. If tangents were defined consistently, this situation would not occur unless the surface had a high degree of curvature across this polygon. And if that were the case, we would really want to tessellate the geometry further to prevent this from happening.

The result is that in case 1, our light direction vector is smoothly interpolated from the first vertex to the second and all the interpolated vectors are roughly the same length. If we normalize this light vector at each vertex, the interpolated vectors are very close to unit length as well. But in case 2, the interpolation causes vectors of wildly different lengths to be generated, some of them near zero. This causes severe artifacts in the lighting calculation.

OpenGL does not have a defined vertex attribute for a tangent vector. The best choice is to use a generic vertex attribute to pass in the tangent value. We don't need to compute the binormal in the application; we have the vertex shader compute it automatically.

The shaders described in the following section are descendants of the "bumpy/shiny" shader that John Kessenich and I developed for the SIGGRAPH 2002 course, State of the Art in Hardware Shading.

11.4.2. Vertex Shader

The vertex shader for our procedural bump map shader is shown in Listing 11.7. This shader is responsible for computing the surface-local direction to the light and the surface-local direction to the eye. To do this, it accepts the incoming vertex position, surface normal, and tangent vector; computes the binormal; and transforms the eye space light direction and viewing direction, using the created surface-local transformation matrix.
The texture coordinates are also passed on to the fragment shader because they are used to determine the position of our procedural bumps.

Listing 11.7. Vertex shader for doing procedural bump mapping

11.4.3. Fragment Shader

The fragment shader for doing procedural bump mapping is shown in Listing 11.8. A couple of the characteristics of the bump pattern are parameterized by being declared as uniform variables, namely, BumpDensity (how many bumps per unit area) and BumpSize (how wide each bump will be). Two of the general characteristics of the overall surface are also defined as uniform variables: SurfaceColor (base color of the surface) and SpecularFactor (specular reflectance property).

The bumps that we compute are round. Because the texture coordinate is used to determine the positioning of the bumps, the first thing we do is multiply the incoming texture coordinate by the density value. This controls whether we see more or fewer bumps on the surface. Using the resulting grid, we compute a bump located in the center of each grid square. The components of the perturbation vector p are computed as the distance from the center of the bump in the x direction and the distance from the center of the bump in the y direction. (We only perturb the normal in the x and y directions. The z value for our perturbation normal is always 1.0.) We compute a "pseudodistance" d by squaring the components of p and summing them. (The real distance could be computed at the cost of doing another square root, but it's not really necessary if we consider BumpSize to be a relative value rather than an absolute value.)

To perform a proper reflection calculation later on, we really need to normalize the perturbation normal. This normal must be a unit vector so that we can perform dot products and get accurate cosine values for use in the lighting computation. We normalize a vector by multiplying each component of the normal by 1.0 / sqrt(x² + y² + z²). Because of our computation for d, we've already computed part of what we need (i.e., x² + y²). Furthermore, because we're not perturbing z at all, we know that z² will always be 1.0.
To minimize the computation, we just finish computing our normalization factor at this point in the shader by computing 1.0 / sqrt(d + 1.0).

Next, we compare d to BumpSize to see if we're in a bump or not. If we're not, we set our perturbation vector to 0 and our normalization factor to 1.0. The lighting computation happens in the next few lines. We compute our normalized perturbation vector by multiplying through with the normalization factor f. The diffuse and specular reflection values are computed in the usual way, except that the interpolated surface-local coordinate light and view direction vectors are used. We get decent results without normalizing these two vectors as long as we don't have large differences in their values between vertices.

Listing 11.8. Fragment shader for procedural bump mapping
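The per-fragment arithmetic described in this section can be checked on the CPU. The following Python sketch is an illustration of the steps in the text, not the shader from Listing 11.8: the function names are hypothetical, the default values chosen for `bump_density` and `bump_size` are assumptions, and only the diffuse term is shown.

```python
import math

def bump_normal(s, t, bump_density=16.0, bump_size=0.15):
    """Perturbed unit normal in surface-local space for texture coordinate (s, t)."""
    # Scale the texture coordinate so each grid square spans one unit.
    cx, cy = bump_density * s, bump_density * t
    # Offset from the center of the enclosing grid square.
    px = (cx - math.floor(cx)) - 0.5
    py = (cy - math.floor(cy)) - 0.5
    # "Pseudodistance": squared distance from the bump center.
    d = px * px + py * py
    # Normalization factor for (px, py, 1.0), reusing d = x^2 + y^2.
    f = 1.0 / math.sqrt(d + 1.0)
    # Outside the bump: fall back to the unperturbed normal (0, 0, 1).
    if d >= bump_size:
        px, py, f = 0.0, 0.0, 1.0
    return (px * f, py * f, f)

def diffuse(normal, light_dir):
    """Lambertian term with both vectors in surface-local space."""
    return max(sum(n * l for n, l in zip(normal, light_dir)), 0.0)

# Center of a grid square: the normal is unperturbed.
center = bump_normal(0.5 / 16.0, 0.5 / 16.0)
# Corner of a grid square: outside the bump, so also unperturbed.
corner = bump_normal(0.0, 0.0)
# Partway up the side of a bump: tilted, but still unit length.
side = bump_normal(0.7 / 16.0, 0.5 / 16.0)
side_length = math.sqrt(sum(c * c for c in side))

# A light shining straight down the surface normal.
print(diffuse(center, (0.0, 0.0, 1.0)), diffuse(side, (0.0, 0.0, 1.0)))
```

Note that d never exceeds 0.5 (the squared distance to a grid corner), so in this sketch a `bump_size` of 0.5 or more would make every fragment count as inside a bump.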

The results from the procedural bump map shader are shown applied to two objects, a simple box and a torus, in Color Plate 15. The texture coordinates are used as the basis for positioning the bumps, and because the texture coordinates go from 0.0 to 1.0 four times around the diameter of the torus, the bumps look much closer together on that object.

11.4.4. Normal Maps

It is easy to modify our shader so that it obtains the normal perturbation values from a texture rather than generating them procedurally. A texture that contains normal perturbation values for the purpose of bump mapping is called a BUMP MAP or a NORMAL MAP.

An example of a normal map and the results applied to our simple box object are shown in Color Plate 16. Individual components of the normals lie in the range [-1,1]. To be encoded into an RGB texture with 8 bits per component, they must be mapped into the range [0,1]. The normal map appears chalk blue because the default perturbation vector of (0,0,1) is encoded in the normal map as (0.5,0.5,1.0). The normal map could be stored in a floating-point texture. Today's graphics hardware supports textures with 16-bit floating-point values per color component and textures with 32-bit floating-point values per color component. If you use a floating-point texture format for storing normals, your image quality tends to increase (for instance, reducing banding effects in specular highlights). Of course, textures that are 16 bits per component require twice as much texture memory as 8-bit per component textures, and performance might be reduced.

The vertex shader is identical to the one described in Section 11.4.2. The fragment shader is almost the same, except that instead of computing the perturbed normal procedurally, the fragment shader obtains it from a normal map stored in texture memory.
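The mapping between normal components in [-1,1] and 8-bit color values can be sketched in a few lines of Python. The function names `encode_normal` and `decode_normal` are hypothetical, and the round-to-nearest quantization is an assumption; a real texture pipeline may round differently.

```python
def encode_normal(n):
    """Map each component from [-1, 1] to an 8-bit value in 0..255."""
    return tuple(int((c * 0.5 + 0.5) * 255.0 + 0.5) for c in n)

def decode_normal(rgb):
    """Map each 8-bit value back to the range [-1, 1]."""
    return tuple(c / 255.0 * 2.0 - 1.0 for c in rgb)

# The unperturbed normal (0, 0, 1) encodes to roughly (0.5, 0.5, 1.0) of full scale,
# which is the pale blue that dominates a typical normal map.
default_rgb = encode_normal((0.0, 0.0, 1.0))

# Decoding does not return exactly 0.0 for x and y: 8 bits cannot represent 0.5.
round_trip = decode_normal(default_rgb)

print(default_rgb, round_trip)
```

The small round-trip error (up to 1/255 per component) is the kind of quantization that a floating-point texture format avoids.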
